In the

previous post, we had a look at the Performance Schema tables to monitor MySQL replication. We discussed why these were needed, what advantages they have to offer and what each table stands for. We also did a small exercise to see how one can select a particular table given the data that is needed. We will now dive deeper and look at the individual tables to understand what fields they are made of. We will also go through different scenarios to see how the different pieces of data can be used.

Note that these tables report the status/configuration of a replication slave. So, these are empty at the master server or at a standalone server. All the SELECTs we will talk of, are done at the slave. Lets take the tables one by one and go through them.

REPLICATION_CONNECTION_CONFIGURATION:

This table shows the configuration parameters used by the slave server for connecting to the master server. Lets set up replication with the following

CHANGE MASTER TO command executed on a slave server. The CHANGE MASTER TO command adds/changes the parameters that the slave server uses for connecting to the master server. To see the definition of all the fields in this table, please visit our

official documentation.

mysql> change master to master_host='127.0.0.1', master_port=13000 ,

master_user='rpluser', master_password='secret', master_connect_retry=40,

master_retry_count=10, master_ssl=1, master_ssl_verify_server_cert=1,

master_auto_position=1;

Query OK, 0 rows affected, 2 warnings (0.51 sec)

We can now look up the connection parameters set in our table for

replication connection configuration. Lets do a SELECT * on this table to see what we get:

mysql> select * from performance_schema.replication_connection_configuration\G

*************************** 1. row ***************************

HOST: 127.0.0.1

PORT: 13000

USER: rpluser

NETWORK_INTERFACE:

AUTO_POSITION: 1

SSL_ALLOWED: YES

SSL_CA_FILE:

SSL_CA_PATH:

SSL_CERTIFICATE:

SSL_CIPHER:

SSL_KEY:

SSL_VERIFY_SERVER_CERTIFICATE: YES

SSL_CRL_FILE:

SSL_CRL_PATH:

CONNECTION_RETRY_INTERVAL: 40

CONNECTION_RETRY_COUNT: 10

1 row in set (0.00 sec)

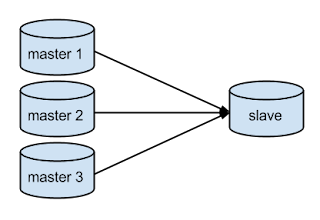

You can see that the changes made by CHANGE MASTER TO command can be seen in this table. These values are preserved here until the next CHANGE MASTER TO or RESET SLAVE ALL command. A row in this table represents a connection between the slave and its master. Since we have only one master here, we see only one row. If MySQL supported multi-source, then this table would have information about multiple sources- one row per source.

Note that a

START SLAVE is not done yet. The CHANGE MASTER TO command makes changes to the internal data structures regarding the connection parameters (if you know the internals, the "active_mi" variable is what I am talking about) and this table just picks up values from this data structure. So, the values shown in the table just reflects the state of the this data structure at the moment the SELECT was done.

Lets now move to the next table "replication_connection_status".

REPLICATION_CONNECTION_STATUS:

This table shows the current status of connection between the slave and its master. Technically, it talks about the IO thread's status. To view the definition of all the fields in this table, please visit our

official documentation. Now, we need a START SLAVE because this command establishes the connection between slave and its master. So, lets execute a START SLAVE/ START SLAVE IO_THREAD command followed by a SELECT* from "replication_connection_status" table.

mysql> start slave; Query OK, 0 rows affected (0.05 sec)

mysql> select * from performance_schema.replication_connection_status\G

*************************** 1. row ***************************

SOURCE_UUID: 1766a057-10cd-11e3-b06a-0021cc72bfc3

THREAD_ID: 2

SERVICE_STATE: ON

RECEIVED_TRANSACTION_SET:

LAST_ERROR_NUMBER: 0

LAST_ERROR_MESSAGE:

LAST_ERROR_TIMESTAMP: 0000-00-00 00:00:00

So, we can see from here that, at the moment SELECT was done:

- The slave was reading from a master with UUID= 1766a057-10cd-11e3-b06a-0021cc72bfc3,

- The thread that handles this connection (IO thread) has an id=2. The thread_id field is very useful if you want to join this table with other Performance Schema/Information Schema tables. As an example, you can join this table with the table performance_schema.threads to extract a lot more information about this connection (IO thread). Likewise, there is a lot more thread-wise statistics you can collect with the other existing Performance Schema tables. Note that there is a bug here but thankfully there is an easy workaround.

- Further, we see that our connection (the IO thread) is ready for service (SERVICE_STATE: ON).

Note that the service state shows one of (ON/OFF/CONNECTING):

- ON: meaning ready to serve i.e. if there is an event that is executed on master, you can assume that it will be read by the slave via this connection.

- OFF: meaning the connection (IO thread) is not serving i.e. it is not ready to read from the master or killed.

- CONNECTING: meaning the connection (IO thread)is not established yet but the slave is trying to connect.

It is important to note here that the names and semantics of thread_id and service_state is extended to all the status tables and they all mean exactly the same. Hence, going forward lets skip explaining these two columns in the following tables.

Lets now execute a couple of queries on the master server and see if we can get the set of received transactions on our slave server.

mysql>create table test.t(a int primary key); Query OK, 0 rows affected (0.61 sec)

mysql>insert into test.t values(1); Query OK, 1 row affected (0.06 sec)

Lets turn back to our slave server and see what the field "received_transaction_set" has.

mysql> select received_transaction_set from performance_schema.replication_connection_status\G

*************************** 1. row ***************************

RECEIVED_TRANSACTION_SET: 1766a057-10cd-11e3-b06a-0021cc72bfc3:1-4

1 row in set (0.00 sec)

Lets now cause an error in the connection (IO thread) and look at the error related fields in this table. To cause an error in the connection, we will provide a wrong password in our CHANGE MASTER TO command.

mysql>stop slave; Query OK, 0 rows affected (0.10 sec)mysql>change master to master_password='abcd'; Query OK, 0 rows affected, 2 warnings (0.29 sec)

mysql> start slave;Query OK, 0 rows affected (0.05 sec)

mysql> select last_error_number, last_error_message, last_error_timestamp from

performance_schema.replication_connection_status\G

*************************** 1. row ***************************

LAST_ERROR_NUMBER: 1045

LAST_ERROR_MESSAGE: error connecting to master 'rpluser@127.0.0.1:13000' - retry-time: 40 retries: 2

LAST_ERROR_TIMESTAMP: 0000-00-00

1 row in set (0.00 sec)

The names and semantics of the error related fields(number, message and timestamp) are again exactly same across all the tables, wherever applicable. Hence, going ahead, lets skip monitoring error related fields in the following tables.

Lets now clean-up the error we caused to move ahead and work with other tables.

mysql> stop slave; Query OK, 0 rows affected (0.06 sec)mysql> change master to master_password='secret'; Query OK, 0 rows affected, 2 warnings (0.25 sec)mysql> start slave; Query OK, 0 rows affected (0.08 sec)

mysql> select last_error_number, last_error_message, last_error_timestamp from

performance_schema.replication_connection_status\G

*************************** 1. row ***************************

LAST_ERROR_NUMBER: 0

LAST_ERROR_MESSAGE:

LAST_ERROR_TIMESTAMP: 0000-00-00

1 row in set (0.00 sec)

Great! We are done with monitoring the connection specific parameters between a slave and its master. Lets now move to the other half of replication module- execution of events received from the master on the slave server.

REPLICATION_EXECUTE_CONFIGURATION/STATUS:

This table shows the configuration parameters that affect execution of transactions by the slave server. Parameters stored in the table can be changed with the CHANGE MASTER TO command. Lets setup a delayed replication now and see how the same can be read from the tables

replication execute configuration.

mysql> stop slave; Query OK, 0 rows affected (0.11 sec)mysql> change master to master_delay=150; Query OK, 0 rows affected (0.29 sec)mysql> start slave; Query OK, 0 rows affected (0.06 sec)

mysql> select desired_delay from performance_schema.replication_execute_configuration\G

*************************** 1. row ***************************

DESIRED_DELAY: 150

1 row in set (0.00 sec)

Lets now execute an event at our master to see if the event is executed at the slave or not.

mysql> insert into test.t values(1); Query OK, 1 row affected (0.06 sec)

Lets move to slave and make sure our slave has not elapsed the 150 second delay yet.

mysql> select remaining_delay from performance_schema.replication_execute_status\G

*************************** 1. row ***************************

REMAINING_DELAY: 147

1 row in set (0.00 sec)

Now we should be able to see that although the insert(2) is received at slave it is not executed yet. The last time we saw our received_transaction_set (before insert(2)), it was 1766a057-10cd-11e3-b06a-0021cc72bfc3:1-4. So insert(2) should be assigned a GTID 1766a057-10cd-11e3-b06a-0021cc72bfc3:5.

mysql> select received_transaction_set from performance_schema.replication_connection_status\G

*************************** 1. row ***************************

RECEIVED_TRANSACTION_SET: 1766a057-10cd-11e3-b06a-0021cc72bfc3:5

1 row in set (0.00 sec)

Ok, we have received the event. Now lets see if this insert(2) with GTID 1766a057-10cd-11e3-b06a-0021cc72bfc3:5 is executed yet.

mysql> select @@global.gtid_executed\G

*************************** 1. row ***************************

@@global.gtid_executed: 1766a057-10cd-11e3-b06a-0021cc72bfc3:1-4

1 row in set (0.00 sec)

Lets now wait until, our delay is elapsed.

mysql> select remaining_delay from performance_schema.replication_execute_status\G

*************************** 1. row ***************************

REMAINING_DELAY: 0

1 row in set (0.00 sec)

And check if the insert(2) has been executed.

mysql> select @@global.gtid_executed\G

*************************** 1. row ***************************

@@global.gtid_executed: 1766a057-10cd-11e3-b06a-0021cc72bfc3:1-5

1 row in set (0.00 sec)

Executed. Great!

Before going ahead to have a look at the other goodies in store, lets revise a couple of things:

- SQL THREAD: Executes the events received from the master at slave.

- MULTI-THREADED SLAVE (MTS): With only one thread (SQL thread) reading and applying events that are applied by multiple clients concurrently on the master, the slave starts lagging behind the master. In this case, one can chose to opt for MTS. Simply put, we have a buffer (relaylog) on slave server that stores the events executed on the master server. The coordinator thread reads the relaylog and assigns these to worker threads which execute the events assigned to them parallely on the slave. The coordinator thread is the scheduler and the worker threads are responsible for executing the events.

REPLICATION_EXECUTE_STATUS_BY_COORDINATOR:

This table is used in two ways depending on whether the slave is operating with one applier (MTS disabled) or multiple appliers executing events in parallel(MTS enabled). When operating with one applier, this table reports the status of this applier. When multiple appliers, the same table reports the status of the scheduler (coordinator thread). Lets explore the single applier use case.

mysql> select * from performance_schema.replication_execute_status_by_coordinator\G

*************************** 1. row ***************************

THREAD_ID: 13

SERVICE_STATE: ON

LAST_ERROR_NUMBER: 0

LAST_ERROR_MESSAGE:

LAST_ERROR_TIMESTAMP: 0000-00-00 00:00:00

1 row in set (0.00 sec)

We see that the applier's (SQL thread) id is 13 and it is alive (SERVICE_STATE: ON). For now there are only two states that we show- ON/OFF. The semantics of error fields are exactly similar to the explanations earlier. Hence we skip them here.

The Multiple applier case (MTS enabled) is similar except that this table will show the scheduler's (coordinator) thread id. Hence we will skip the explanations and move to the next table. To read more about this table, please visit our

official documentation for this table.

REPLICATION_EXECUTE_STATUS_BY_WORKER (Single Applier Case):

When the slave server is operating in the single applier mode, lets see what the table replication_execute_status_by_worker shows us. Conceptually, we don't have a scheduler and multiple appliers. so the table should be empty.

mysql> select * from performance_schema.replication_execute_status_by_worker\G

Empty set (0.00 sec)

And it is empty :)

REPLICATION_EXECUTE_STATUS_BY_WORKER (Multiple Appliers Case):

Lets now set up our slave to use multiple appliers (MTS enabled) and then see how we can monitor the replication status concerned with our scheduler and appliers (coordinator and worker threads). Lets call our multiple appliers "worker".

mysql> stop slave; Query OK, 0 rows affected (0.10 sec)

mysql> SET GLOBAL slave_parallel_workers=2; Query OK, 0 rows affected (0.00 sec)

Note that a START SLAVE is necessary to have the workers ready for action. Just setting slave_parallel_workers=2, doesn’t spawn the 2 workers.

mysql> start slave; Query OK, 0 rows affected, 1 warning (0.14 sec)

mysql> select * from performance_schema.replication_execute_status_by_worker\G

*************************** 1. row ***************************

WORKER_ID: 0

THREAD_ID: 16

SERVICE_STATE: ON

LAST_SEEN_TRANSACTION:

LAST_ERROR_NUMBER: 0

LAST_ERROR_MESSAGE:

LAST_ERROR_TIMESTAMP: 0000-00-00 00:00:00

*************************** 2. row ***************************

WORKER_ID: 1

THREAD_ID: 17

SERVICE_STATE: ON

LAST_SEEN_TRANSACTION:

LAST_ERROR_NUMBER: 0

LAST_ERROR_MESSAGE:

LAST_ERROR_TIMESTAMP: 0000-00-00 00:00:00

2 rows in set (0.00 sec)

So, we see that we have two rows to represent the two workers. The worker_id field here is same as the id field in slave_worker_info table, so you can again join the two tables to know more about a worker thread. The service_state(ON/OFF) has nothing new to add.

The interesting parts here are:

- You get the per worker error. So, if two of your workers have errored out in a group, you can identify both the errors as opposed to only one through SHOW SLAVE STATUS.

- last_seen_transaction is the new field we have added to help detect MTS failures better. This helps you find the GTID of all the transactions being executed by the all the workers at the moment the error happened. These values are preserved in case of an error and in the idle state of the worker after executing the transactions.

To read more about this table and field-wise descriptions, please visit our

official documentation for this table.

CONCLUDING REMARKS:

The goal of this post was to help you familiarize with the Performance Schema tables relating to replication. Please try out this new interface for monitoring MySQL replication and let us know your feedback. To read more on these Performance Schema tables, you can visit the

blog on multisource replication by Rith. These Performance Schema tables look really powerful with multisource replication. The motive behind this feature was to make it easier to monitor the health and performance of MySQL replication. We hope this new interface to monitor MySQL replication makes our user's life easier.